The fundamentals of Audio Engineering

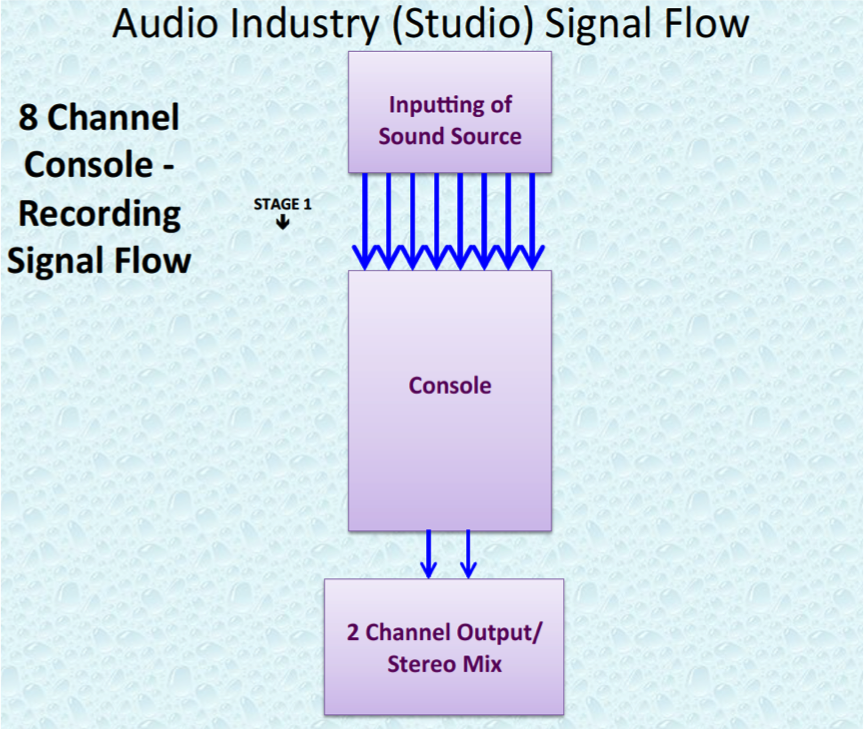

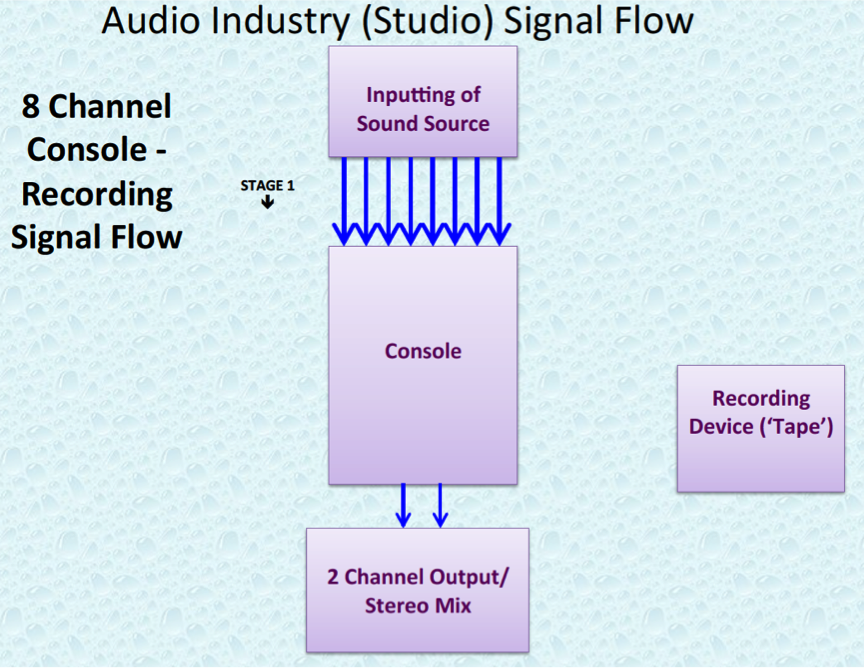

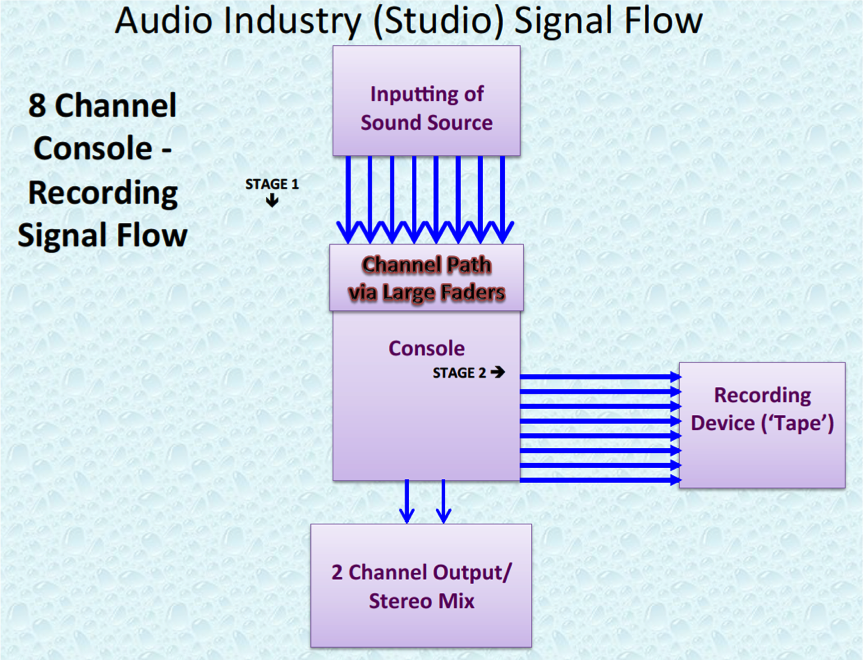

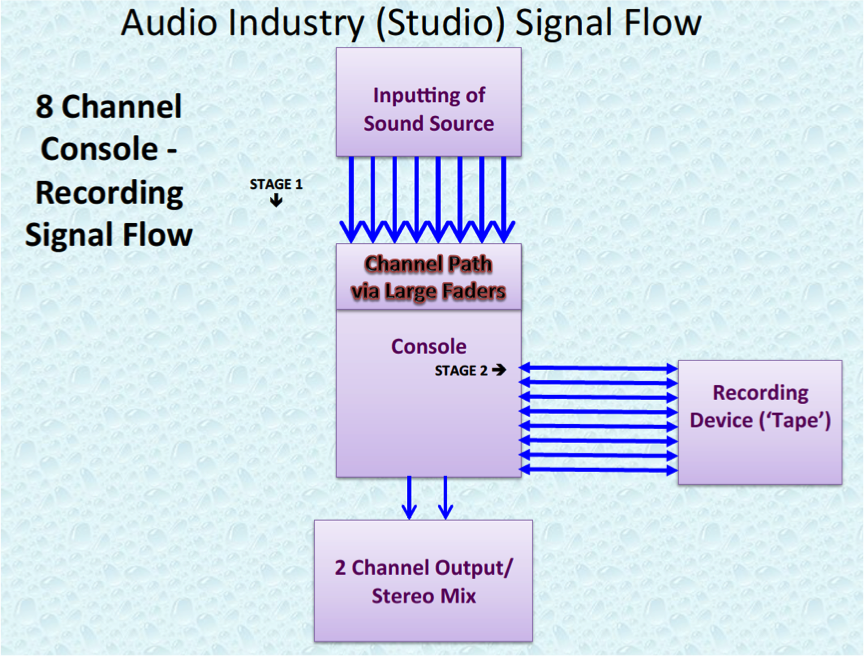

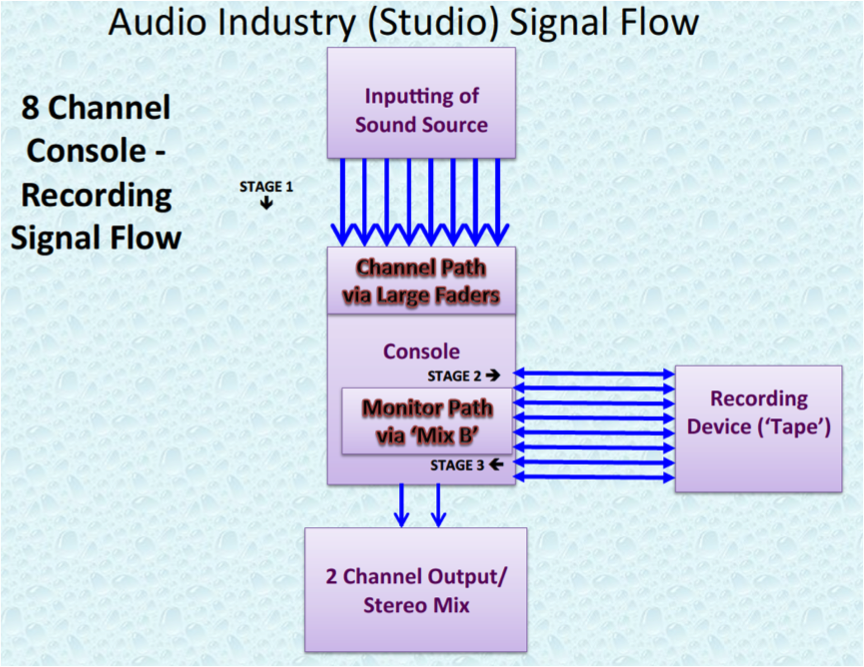

Audio Engineering depends on its key personnel, the engineers, understanding the fundamentals, and Signal Flow is one of the most important of these. Whatever the studio console, an experienced engineer who understands signal flow will adapt quickly to any studio environment, whether its equipment is analogue, digital or digital virtual.

At a very elementary level, there are two phases in Audio Engineering:

- The Recording phase, as part of the Production process. [NB: the Recording process is also referred to as tracking]
- The Mixing phase, as part of the Post-Production process